Hadoop Fully Distributed Mode

| spark01 | spark02 | spark03 |
|---|---|---|
| 192.168.171.101 | 192.168.171.102 | 192.168.171.103 |
| namenode | namenode | |
| journalnode | journalnode | journalnode |
| datanode | datanode | datanode |
| nodemanager | nodemanager | nodemanager |
| resource manager | resource manager | |
| job history | | |
| job log | job log | job log |

1. Preparation

1.1 Update the operating system and software

yum -y update

A reboot is recommended after the update.

1.2 Install common packages

yum -y install gcc gcc-c++ autoconf automake cmake make rsync vim man zip unzip net-tools zlib zlib-devel openssl openssl-devel pcre-devel tcpdump lrzsz tar wget openssh-server

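1.3 Set the hostnames

Run the matching command on each of the three hosts:

hostnamectl set-hostname spark01

hostnamectl set-hostname spark02

hostnamectl set-hostname spark03

1.4 Set the IP address

vim /etc/sysconfig/network-scripts/ifcfg-ens160

Sample NIC configuration file. The suffix of the file name, NAME, and DEVICE should all match the interface name on your host (this sample uses ens32), and IPADDR differs per host: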

TYPE="Ethernet"
PROXY_METHOD="none"
BROWSER_ONLY="no"
BOOTPROTO="none"
DEFROUTE="yes"
IPV4_FAILURE_FATAL="no"
IPV6INIT="yes"
IPV6_AUTOCONF="yes"
IPV6_DEFROUTE="yes"
IPV6_FAILURE_FATAL="no"
IPV6_ADDR_GEN_MODE="stable-privacy"
NAME="ens32"
DEVICE="ens32"
ONBOOT="yes"
IPADDR="192.168.171.101"
PREFIX="24"
GATEWAY="192.168.171.2"
DNS1="192.168.171.2"
IPV6_PRIVACY="no"

1.5 Disable SELinux and the firewall

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

setenforce 0

systemctl stop firewalld

systemctl disable firewalld

1.6 Edit the hosts file

vim /etc/hosts

Add the following entries:

192.168.171.101 spark01
192.168.171.102 spark02
192.168.171.103 spark03
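Editing /etc/hosts by hand on three machines is easy to get wrong, so the step can also be scripted. The following is a minimal sketch, not part of the original procedure: `add_host_entry` is a hypothetical helper name, and the hosts file path is passed as a parameter so the function can be tried on a scratch copy before touching /etc/hosts.

```shell
# Append "IP hostname" to a hosts file unless the hostname is already listed.
# Usage: add_host_entry <hosts-file> <ip> <hostname>
add_host_entry() (
  hosts_file=$1
  ip=$2
  name=$3
  # Look for the hostname as a whole field on any non-comment line.
  if ! awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$hosts_file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
  fi
)
```

For example, `add_host_entry /etc/hosts 192.168.171.102 spark02` adds the entry once; running it again leaves the file unchanged.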

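1.7 Upload the software and configure environment variables

Create the software directory on every host:

mkdir -p /opt/soft

Perform the following steps on spark01.

Change to the software directory:

cd /opt/soft

Download the JDK: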

wget -O jdk-8u391-linux-x64.tar.gz "https://download.oracle.com/otn/java/jdk/8u391-b13/b291ca3e0c8548b5a51d5a5f50063037/jdk-8u391-linux-x64.tar.gz?AuthParam=1698206552_11c0bb831efdf87adfd187b0e4ccf970"

Download ZooKeeper:

wget https://dlcdn.apache.org/zookeeper/zookeeper-3.8.3/apache-zookeeper-3.8.3-bin.tar.gz

Download Hadoop:

wget https://dlcdn.apache.org/hadoop/common/hadoop-3.3.5/hadoop-3.3.5.tar.gz

Extract the JDK, ZooKeeper, and Hadoop archives and rename the resulting directories:

tar -zxvf jdk-8u391-linux-x64.tar.gz -C /opt/soft/

mv jdk1.8.0_391/ jdk-8

tar -zxvf apache-zookeeper-3.8.3-bin.tar.gz

mv apache-zookeeper-3.8.3-bin zookeeper-3

tar -zxvf hadoop-3.3.5.tar.gz -C /opt/soft/

mv hadoop-3.3.5/ hadoop-3

Configure the environment variables:

vim /etc/profile.d/my_env.sh

Add the following content:

export JAVA_HOME=/opt/soft/jdk-8
# export set JAVA_OPTS="--add-opens java.base/java.lang=ALL-UNNAMED"
export ZOOKEEPER_HOME=/opt/soft/zookeeper-3
export HDFS_NAMENODE_USER=root
export HDFS_SECONDARYNAMENODE_USER=root
export HDFS_DATANODE_USER=root
export HDFS_ZKFC_USER=root
export HDFS_JOURNALNODE_USER=root
export HADOOP_SHELL_EXECNAME=root
export YARN_RESOURCEMANAGER_USER=root
export YARN_NODEMANAGER_USER=root
export HADOOP_HOME=/opt/soft/hadoop-3
export HADOOP_INSTALL=$HADOOP_HOME
export HADOOP_MAPRED_HOME=$HADOOP_HOME
export HADOOP_COMMON_HOME=$HADOOP_HOME
export HADOOP_HDFS_HOME=$HADOOP_HOME
export YARN_HOME=$HADOOP_HOME
export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
export PATH=$PATH:$JAVA_HOME/bin:$ZOOKEEPER_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Reload the environment variables.

Note: after distributing the software and configuration files, run this step on every host.

source /etc/profile

2. ZooKeeper

2.1 Edit the configuration file

cd $ZOOKEEPER_HOME/conf

vim zoo.cfg

# Length of one tick (the heartbeat unit): 2 s
tickTime=2000
# Timeout for a follower's initial synchronization: 10 ticks, i.e. 20 s
initLimit=10
# Timeout for a regular sync request and its response: 5 ticks, i.e. 10 s
syncLimit=5
# Directory for in-memory snapshot files
dataDir=/home/zookeeper-3/data
# Directory for transaction logs
dataLogDir=/home/zookeeper-3/datalog
# Client port of this ZooKeeper node
clientPort=2181
# Maximum concurrent connections from one client (identified by IP) to one node; default 60
maxClientCnxns=1000
# Keep 7 snapshot files in dataDir; default 3
autopurge.snapRetainCount=7
# Interval of the snapshot purge task, in hours; 0 (the default) disables purging
autopurge.purgeInterval=1
# Minimum session timeout a client may request; default 2 ticks
minSessionTimeout=4000
# Maximum session timeout a client may request; default 20 ticks (40 s with this tickTime), set to 300 s here
maxSessionTimeout=300000
# ZooKeeper 3.5 and later start the AdminServer by default on port 8080; move it to 9001
admin.serverPort=9001
# Cluster members
server.1=spark01:2888:3888
server.2=spark02:2888:3888
server.3=spark03:2888:3888

The same file without comments:

tickTime=2000
initLimit=10
syncLimit=5
dataDir=/home/zookeeper-3/data
dataLogDir=/home/zookeeper-3/datalog
clientPort=2181
maxClientCnxns=1000
autopurge.snapRetainCount=7
autopurge.purgeInterval=1
minSessionTimeout=4000
maxSessionTimeout=300000
admin.serverPort=9001
server.1=spark01:2888:3888
server.2=spark02:2888:3888
server.3=spark03:2888:3888
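Several zoo.cfg settings are expressed in ticks rather than milliseconds, so the effective timeouts depend on tickTime. The sketch below does that arithmetic for a given file; `zk_timeouts` is a hypothetical helper name, and it assumes simple `key=value` lines like the ones shown above.

```shell
# Print the tick-based timeouts of a zoo.cfg file in milliseconds.
# Usage: zk_timeouts <path-to-zoo.cfg>
zk_timeouts() (
  cfg=$1
  awk -F '=' '
    /^[[:space:]]*#/  { next }            # skip comment lines
    $1 == "tickTime"  { t  = $2 }
    $1 == "initLimit" { il = $2 }
    $1 == "syncLimit" { sl = $2 }
    END {
      printf "initLimit=%d ms\n", il * t
      printf "syncLimit=%d ms\n", sl * t
      # Defaults that apply when min/maxSessionTimeout are not set explicitly
      printf "defaultMinSessionTimeout=%d ms\n", 2 * t
      printf "defaultMaxSessionTimeout=%d ms\n", 20 * t
    }' "$cfg"
)
```

With the values in this guide (tickTime=2000, initLimit=10, syncLimit=5), `zk_timeouts "$ZOOKEEPER_HOME/conf/zoo.cfg"` reports 20000 ms and 10000 ms for the two limits.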

2.2 Create the directories named in the configuration file

Run on every server:

mkdir -p /home/zookeeper-3/data

mkdir -p /home/zookeeper-3/datalog

2.3 myid

Each server's myid must match its server.N entry in zoo.cfg.

On spark01:

echo 1 > /home/zookeeper-3/data/myid

more /home/zookeeper-3/data/myid

On spark02:

echo 2 > /home/zookeeper-3/data/myid

more /home/zookeeper-3/data/myid

On spark03:

echo 3 > /home/zookeeper-3/data/myid

more /home/zookeeper-3/data/myid
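Because the myid value has to agree with the `server.N` line for the same host, it can be derived from zoo.cfg instead of typed by hand. This is a sketch under assumptions: `myid_for_host` is a hypothetical helper, and it expects `server.N=host:port:port` lines with plain hostnames (a hostname containing regex metacharacters would need escaping).

```shell
# Print the N of the "server.N=<host>:..." line for the given host.
# Prints nothing if the host is not listed.
# Usage: myid_for_host <path-to-zoo.cfg> <hostname>
myid_for_host() (
  cfg=$1
  host=$2
  sed -n "s/^server\.\([0-9][0-9]*\)=${host}:.*/\1/p" "$cfg"
)
```

On each node this could be used as `myid_for_host "$ZOOKEEPER_HOME/conf/zoo.cfg" "$(hostname)" > /home/zookeeper-3/data/myid`, after checking that the output is not empty.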

2.4 Write a ZooKeeper systemd service so that it starts on boot

Create the unit file zookeeper.service under /etc/systemd/system/.

Note: create it on every server.

cd /etc/systemd/system

vim zookeeper.service

Content:

[Unit]
Description=zookeeper
After=syslog.target network.target

[Service]
Type=forking
# ZooKeeper log directory; can also be defined in zkServer.sh
Environment=ZOO_LOG_DIR=/home/zookeeper-3/datalog
# JDK path; can also be defined in zkServer.sh
Environment=JAVA_HOME=/opt/soft/jdk-8
ExecStart=/opt/soft/zookeeper-3/bin/zkServer.sh start
ExecStop=/opt/soft/zookeeper-3/bin/zkServer.sh stop
Restart=always
User=root
Group=root

[Install]
WantedBy=multi-user.target

The same unit file without comments:

[Unit]
Description=zookeeper
After=syslog.target network.target

[Service]
Type=forking
Environment=ZOO_LOG_DIR=/home/zookeeper-3/datalog
Environment=JAVA_HOME=/opt/soft/jdk-8
ExecStart=/opt/soft/zookeeper-3/bin/zkServer.sh start
ExecStop=/opt/soft/zookeeper-3/bin/zkServer.sh stop
Restart=always
User=root
Group=root

[Install]
WantedBy=multi-user.target

systemctl daemon-reload

# Run the following commands only after every host has been configured

systemctl start zookeeper

systemctl enable zookeeper

systemctl status zookeeper

3. Hadoop

Edit the following configuration files:

cd $HADOOP_HOME/etc/hadoop

- hadoop-env.sh

- core-site.xml

- hdfs-site.xml

- workers

- mapred-site.xml

- yarn-site.xml

Append to the end of hadoop-env.sh:

export JAVA_HOME=/opt/soft/jdk-8
# export HADOOP_OPTS="--add-opens java.base/java.lang=ALL-UNNAMED"
export HDFS_NAMENODE_USER=root
export HDFS_SECONDARYNAMENODE_USER=root
export HDFS_DATANODE_USER=root
export HDFS_ZKFC_USER=root
export HDFS_JOURNALNODE_USER=root
export HADOOP_SHELL_EXECNAME=root
export YARN_RESOURCEMANAGER_USER=root
export YARN_NODEMANAGER_USER=root

core-site.xml

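<configuration>
  <property><name>fs.defaultFS</name><value>hdfs://lihaozhe</value></property>
  <property><name>hadoop.tmp.dir</name><value>/home/hadoop/data</value></property>
  <property><name>ha.zookeeper.quorum</name><value>spark01:2181,spark02:2181,spark03:2181</value></property>
  <property><name>hadoop.http.staticuser.user</name><value>root</value></property>
  <property><name>dfs.permissions.enabled</name><value>false</value></property>
  <property><name>hadoop.proxyuser.root.hosts</name><value>*</value></property>
  <property><name>hadoop.proxyuser.root.groups</name><value>*</value></property>
  <property><name>hadoop.proxyuser.root.users</name><value>*</value></property>
</configuration>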
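Hadoop reads each `*-site.xml` file as a `<configuration>` element containing one `<property>` with a `<name>` and a `<value>` per setting. If the settings are kept as a plain "name value" list, a small filter can render the XML. The sketch below is illustrative only: `render_site_xml` is a hypothetical helper, it expects one whitespace-separated "name value" pair per line on stdin, and it does no XML escaping (none of the values in this guide need it).

```shell
# Render "name value" lines from stdin as a Hadoop *-site.xml document.
render_site_xml() {
  printf '<?xml version="1.0" encoding="UTF-8"?>\n<configuration>\n'
  while read -r name value; do
    [ -n "$name" ] || continue          # skip blank lines
    printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' \
      "$name" "$value"
  done
  printf '</configuration>\n'
}
```

For example, `printf 'fs.defaultFS hdfs://lihaozhe\n' | render_site_xml` prints a complete document with a single property; redirect the output to a scratch file and review it before replacing a real configuration file.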

hdfs-site.xml

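<configuration>
  <property><name>dfs.nameservices</name><value>lihaozhe</value></property>
  <property><name>dfs.ha.namenodes.lihaozhe</name><value>nn1,nn2</value></property>
  <property><name>dfs.namenode.rpc-address.lihaozhe.nn1</name><value>spark01:8020</value></property>
  <property><name>dfs.namenode.rpc-address.lihaozhe.nn2</name><value>spark02:8020</value></property>
  <property><name>dfs.namenode.http-address.lihaozhe.nn1</name><value>spark01:9870</value></property>
  <property><name>dfs.namenode.http-address.lihaozhe.nn2</name><value>spark02:9870</value></property>
  <property><name>dfs.namenode.shared.edits.dir</name><value>qjournal://spark01:8485;spark02:8485;spark03:8485/lihaozhe</value></property>
  <property><name>dfs.client.failover.proxy.provider.lihaozhe</name><value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value></property>
  <property><name>dfs.ha.fencing.methods</name><value>sshfence</value></property>
  <property><name>dfs.ha.fencing.ssh.private-key-files</name><value>/root/.ssh/id_rsa</value></property>
  <property><name>dfs.journalnode.edits.dir</name><value>/home/hadoop/journalnode/data</value></property>
  <property><name>dfs.ha.automatic-failover.enabled</name><value>true</value></property>
  <property><name>dfs.safemode.threshold.pct</name><value>1</value></property>
</configuration>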

workers

spark01
spark02
spark03

mapred-site.xml

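<configuration>
  <property><name>mapreduce.framework.name</name><value>yarn</value></property>
  <property><name>mapreduce.application.classpath</name><value>$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*</value></property>
  <property><name>mapreduce.jobhistory.address</name><value>spark01:10020</value></property>
  <property><name>mapreduce.jobhistory.webapp.address</name><value>spark01:19888</value></property>
</configuration>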

yarn-site.xml

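<configuration>
  <property><name>yarn.resourcemanager.ha.enabled</name><value>true</value></property>
  <property><name>yarn.resourcemanager.cluster-id</name><value>cluster1</value></property>
  <property><name>yarn.resourcemanager.ha.rm-ids</name><value>rm1,rm2</value></property>
  <property><name>yarn.resourcemanager.hostname.rm1</name><value>spark01</value></property>
  <property><name>yarn.resourcemanager.hostname.rm2</name><value>spark02</value></property>
  <property><name>yarn.resourcemanager.webapp.address.rm1</name><value>spark01:8088</value></property>
  <property><name>yarn.resourcemanager.webapp.address.rm2</name><value>spark02:8088</value></property>
  <property><name>yarn.resourcemanager.zk-address</name><value>spark01:2181,spark02:2181,spark03:2181</value></property>
  <property><name>yarn.nodemanager.aux-services</name><value>mapreduce_shuffle</value></property>
  <property><name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name><value>org.apache.hadoop.mapred.ShuffleHandler</value></property>
  <property><name>yarn.nodemanager.env-whitelist</name><value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value></property>
  <property><name>yarn.nodemanager.pmem-check-enabled</name><value>false</value></property>
  <property><name>yarn.nodemanager.vmem-check-enabled</name><value>false</value></property>
  <property><name>yarn.log-aggregation-enable</name><value>true</value></property>
  <property><name>yarn.log.server.url</name><value>http://spark01:19888/jobhistory/logs</value></property>
  <property><name>yarn.log-aggregation.retain-seconds</name><value>604800</value></property>
</configuration>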

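4. Configure passwordless SSH login

Generate a local key pair and copy the public key to every host. Because dfs.ha.fencing.methods is set to sshfence, each NameNode host must be able to log in to the other, so run these steps on spark02 as well as spark01: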

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

ssh-copy-id root@spark01

ssh-copy-id root@spark02

ssh-copy-id root@spark03

ssh root@spark01 exit

ssh root@spark02 exit

ssh root@spark03 exit

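5. Distribute the software and configuration files

scp -r /etc/profile.d root@spark02:/etc

scp -r /etc/profile.d root@spark03:/etc

scp -r /opt/soft/zookeeper-3 root@spark02:/opt/soft

scp -r /opt/soft/zookeeper-3 root@spark03:/opt/soft

scp -r /opt/soft/hadoop-3/etc/hadoop/* root@spark02:/opt/soft/hadoop-3/etc/hadoop/

scp -r /opt/soft/hadoop-3/etc/hadoop/* root@spark03:/opt/soft/hadoop-3/etc/hadoop/

These commands copy only the Hadoop configuration files, so they assume that /opt/soft/jdk-8 and /opt/soft/hadoop-3 already exist on spark02 and spark03. If they do not, copy those two directories with scp -r first.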
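The same copies are repeated for every target host, so they can be wrapped in a loop. The following sketch is an assumption-laden convenience, not part of the original procedure: `distribute` is a hypothetical function, and `DRY_RUN` is a hypothetical variable that, when set, makes the function print the scp commands instead of running them.

```shell
# Copy the environment file, ZooKeeper, and the Hadoop configuration to each host.
# Usage: distribute <host>...        (set DRY_RUN=1 to only print the commands)
distribute() (
  run=${DRY_RUN:+echo}               # "echo" when DRY_RUN is set, empty otherwise
  for host in "$@"; do
    $run scp -r /etc/profile.d "root@${host}:/etc"
    $run scp -r /opt/soft/zookeeper-3 "root@${host}:/opt/soft"
    $run scp -r /opt/soft/hadoop-3/etc/hadoop/* "root@${host}:/opt/soft/hadoop-3/etc/hadoop/"
  done
)
```

Running `DRY_RUN=1 distribute spark02 spark03` first is a cheap way to review what will be copied; dropping `DRY_RUN` performs the copies.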

6. Load the environment variables on every server

source /etc/profile

7. Start ZooKeeper

7.1 myid

On spark01:

echo 1 > /home/zookeeper-3/data/myid

more /home/zookeeper-3/data/myid

On spark02:

echo 2 > /home/zookeeper-3/data/myid

more /home/zookeeper-3/data/myid

On spark03:

echo 3 > /home/zookeeper-3/data/myid

more /home/zookeeper-3/data/myid

7.2 Start the service

Run the following commands on every node:

systemctl daemon-reload

systemctl start zookeeper

systemctl enable zookeeper

systemctl status zookeeper

7.3 Verify

jps

zkServer.sh status
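In a healthy three-node ensemble, `zkServer.sh status` reports `Mode: leader` on exactly one node and `Mode: follower` on the other two. The two filters below are a small sketch for checking that; `zk_mode` and `one_leader` are hypothetical helper names, and both read their input from stdin so they do not depend on how the status output is collected.

```shell
# Extract the role ("leader", "follower", ...) from `zkServer.sh status` output on stdin.
zk_mode() {
  sed -n 's/^Mode: //p'
}

# Given one role per line on stdin, succeed only if exactly one of them is "leader".
one_leader() {
  [ "$(grep -c '^leader$')" -eq 1 ]
}
```

On a single node, `zkServer.sh status 2>&1 | zk_mode` prints just the role. After collecting the three roles into a file, one per line, `one_leader < roles.txt` confirms that the ensemble elected a single leader.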

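8. Initialize Hadoop

1. Start ZooKeeper on all three nodes: zkServer.sh start

2. Start the JournalNode on all three nodes:

hadoop-daemon.sh start journalnode (deprecated in Hadoop 3), or hdfs --daemon start journalnode

3. Format HDFS on one of the NameNodes: hdfs namenode -format

4. Copy the freshly formatted metadata to the other NameNode:

a) Start the NameNode that was just formatted:

hadoop-daemon.sh start namenode, or hdfs --daemon start namenode

b) On the NameNode that was not formatted, run: hdfs namenode -bootstrapStandby

c) Start the second NameNode:

hadoop-daemon.sh start namenode, or hdfs --daemon start namenode

5. Initialize the HA state in ZooKeeper from one of the NameNodes: hdfs zkfc -formatZK

6. Stop the daemons started above: stop-dfs.sh

7. Start everything: start-all.sh

8. Start the ResourceManager nodes:

yarn-daemon.sh start resourcemanager (deprecated in Hadoop 3), or start-yarn.sh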

http://dl.bintray.com/sequenceiq/sequenceiq-bin/hadoop-native-64-2.5.0.tar

Step 8 is not required, because start-all.sh in step 7 already starts YARN.
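The order of the first-time initialization matters: the JournalNodes must be running before the first NameNode is formatted, and that NameNode must be running before the second one bootstraps from it. The sketch below only prints the sequence as "host: command" lines so it can be reviewed or used as a checklist; `ha_bootstrap_plan` is a hypothetical helper, it uses the `hdfs --daemon` form of the commands, and it assumes ZooKeeper is already running.

```shell
# Print the one-time HA initialization steps in order.
# Usage: ha_bootstrap_plan <first-namenode> <second-namenode> <journalnode-host>...
ha_bootstrap_plan() (
  nn1=$1
  nn2=$2
  shift 2
  for jn in "$@"; do
    printf '%s: hdfs --daemon start journalnode\n' "$jn"
  done
  printf '%s: hdfs namenode -format\n' "$nn1"
  printf '%s: hdfs --daemon start namenode\n' "$nn1"
  printf '%s: hdfs namenode -bootstrapStandby\n' "$nn2"
  printf '%s: hdfs --daemon start namenode\n' "$nn2"
  printf '%s: hdfs zkfc -formatZK\n' "$nn1"
  printf '%s: stop-dfs.sh\n' "$nn1"
  printf '%s: start-all.sh\n' "$nn1"
)
```

For this cluster the call would be `ha_bootstrap_plan spark01 spark02 spark01 spark02 spark03`.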

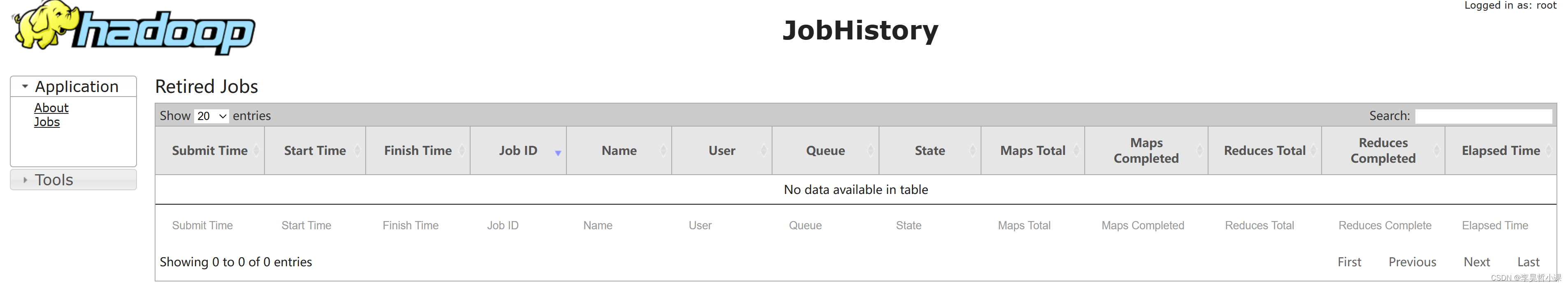

9. Start the history server

mapred --daemon start historyserver

Steps 10, 11, and 12 below do not need to be run.

10. Safe mode

hdfs dfsadmin -safemode enter

hdfs dfsadmin -safemode leave

11. List the NameNodes and query their state

hdfs getconf -namenodes

hdfs haadmin -getServiceState nn1

hdfs haadmin -getServiceState nn2
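Querying each NameNode ID by hand gets repetitive, so the check can be looped. This is a minimal sketch: `ha_states` is a hypothetical helper that calls `hdfs haadmin -getServiceState` for each ID it is given and prints `unknown` when the command fails (for example because that NameNode is down).

```shell
# Print "<id> <state>" for each NameNode ID given as an argument.
# Usage: ha_states nn1 nn2
ha_states() {
  for id in "$@"; do
    printf '%s %s\n' "$id" "$(hdfs haadmin -getServiceState "$id" 2>/dev/null || echo unknown)"
  done
}
```

With the IDs from dfs.ha.namenodes.lihaozhe, `ha_states nn1 nn2` prints one line per NameNode, and in a healthy cluster exactly one of them is `active`.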

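12. Force a state transition

The argument is a NameNode ID from dfs.ha.namenodes.lihaozhe (nn1 or nn2), not a hostname:

hdfs haadmin -transitionToActive --forcemanual nn1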

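Important:

# Before shutting down, stop the services in order
stop-yarn.sh
stop-dfs.sh
# After booting, start the services in order
start-dfs.sh
start-yarn.sh

Or:

# Before shutting down, stop the services
stop-all.sh
# After booting, start the services
start-all.sh

# Use jps to confirm that the processes have started or stopped cleanly before doing anything else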

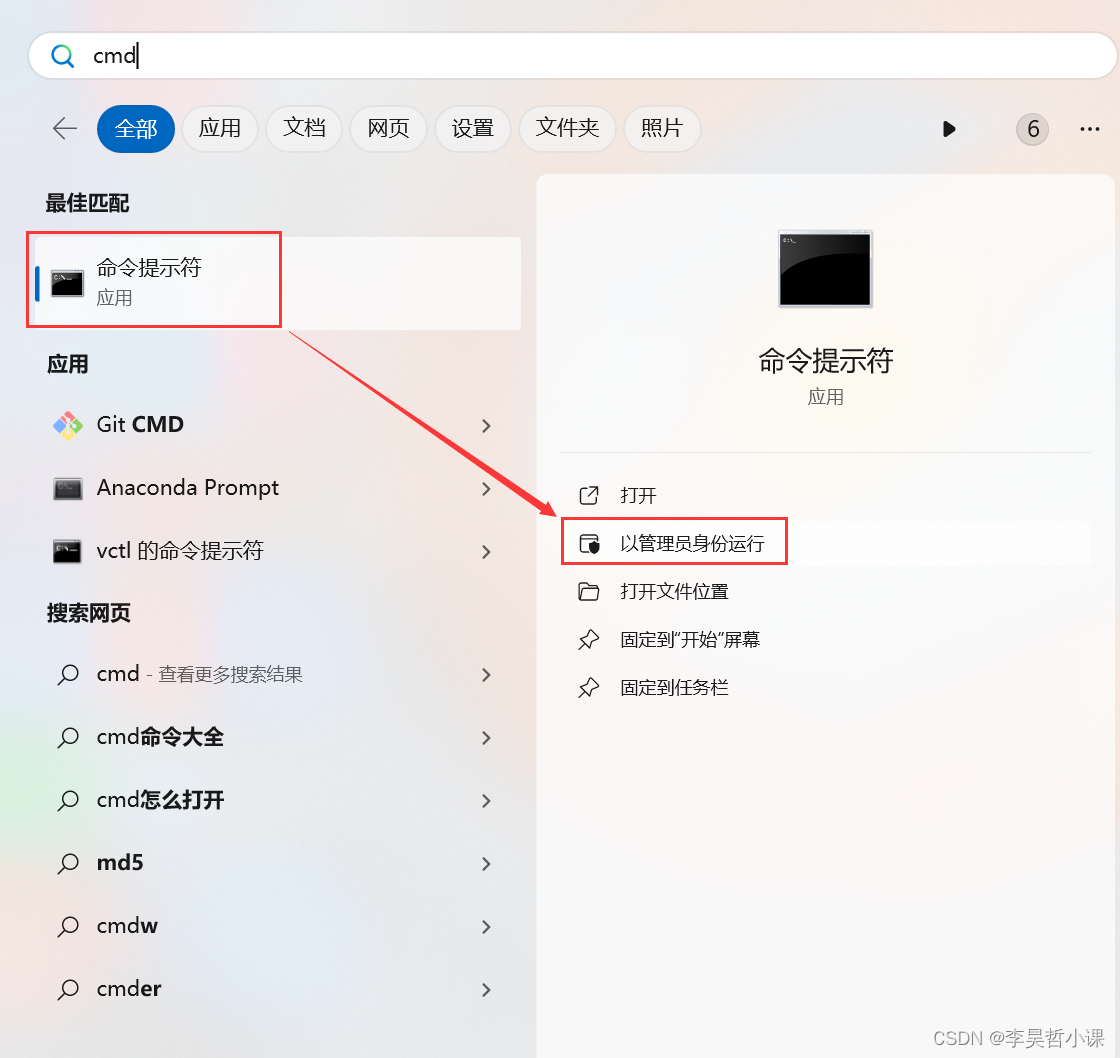

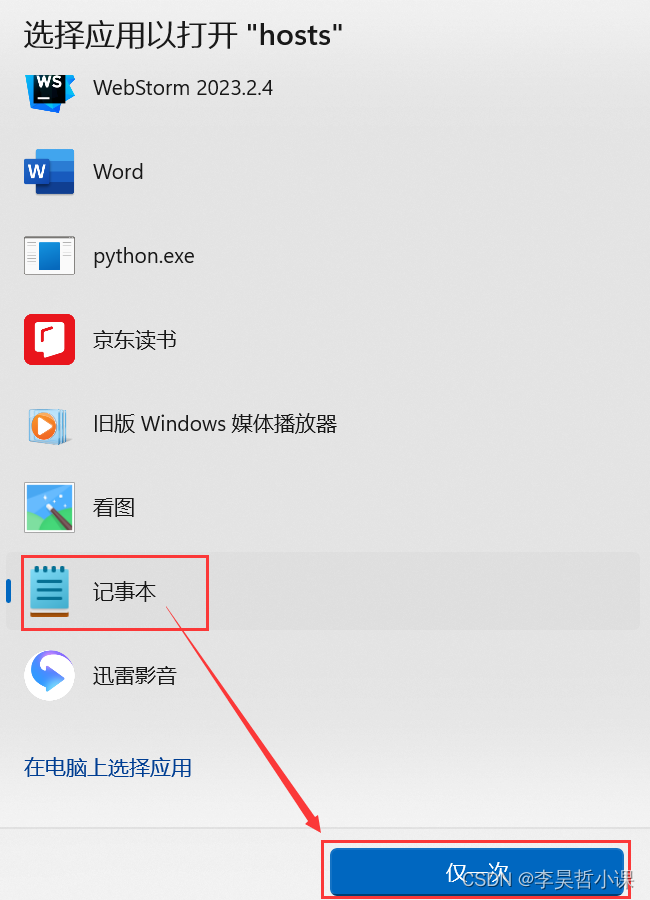

9. Edit the hosts file on Windows

C:\Windows\System32\drivers\etc\hosts

Append the following entries:

192.168.171.101 spark01
192.168.171.102 spark02
192.168.171.103 spark03

On Windows 11, the file's permissions have to be changed first:

-

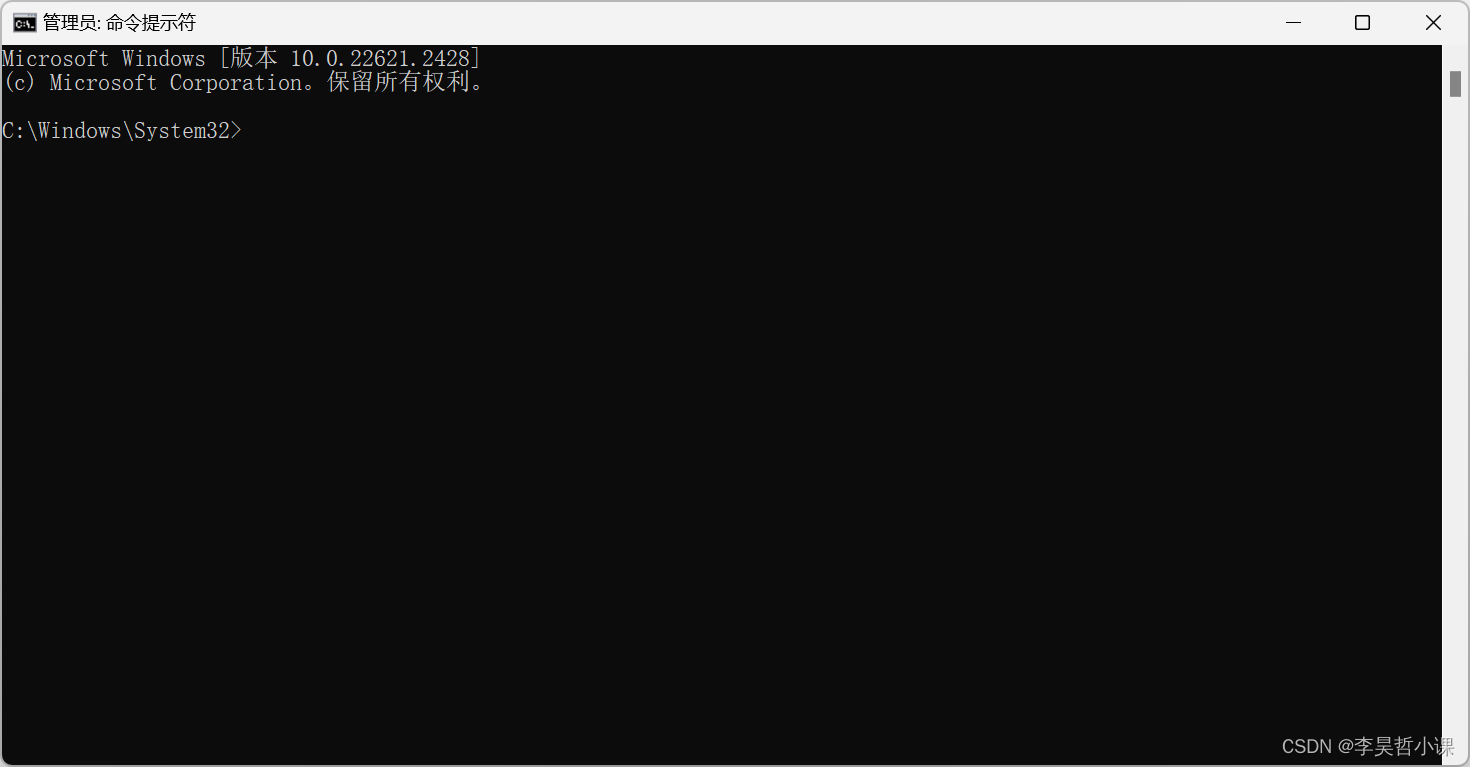

开始搜索 cmd

找到命令头提示符 以管理身份运行

-

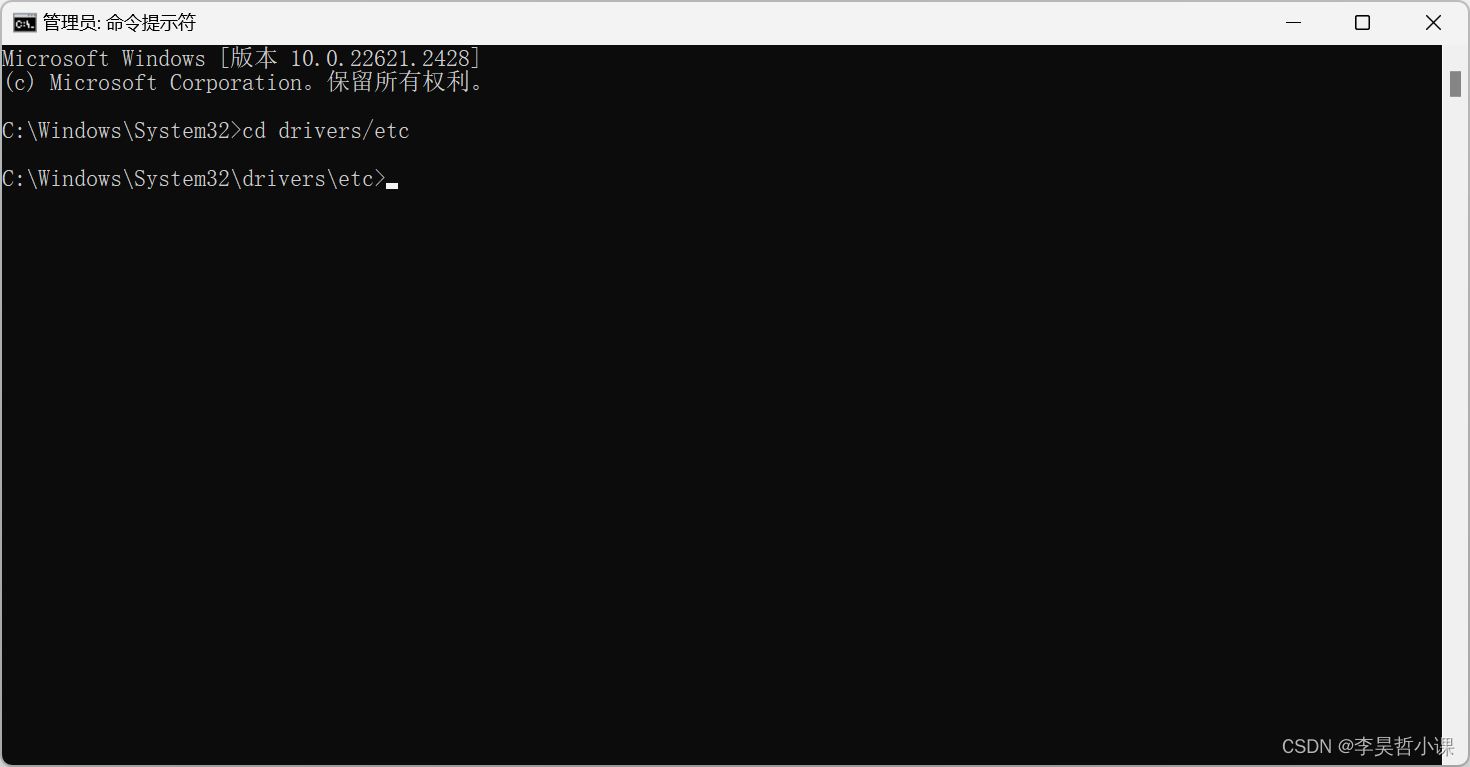

进入 C:\Windows\System32\drivers\etc 目录

cd drivers/etc

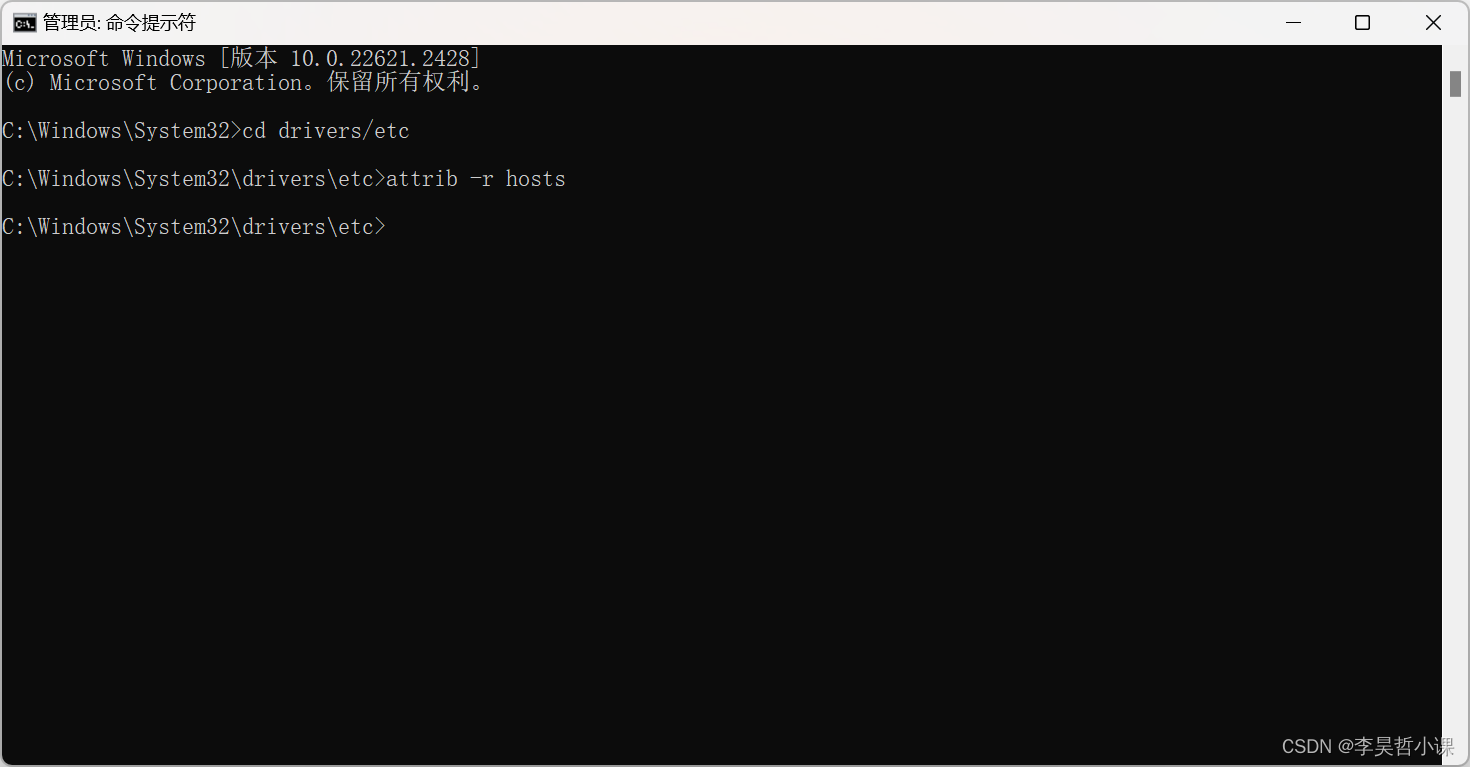

-

去掉 hosts文件只读属性

attrib -r hosts

-

打开 hosts 配置文件

start hosts

-

追加以下内容后保存

192.168.171.101 spark01 192.168.171.102 spark02 192.168.171.103 spark03

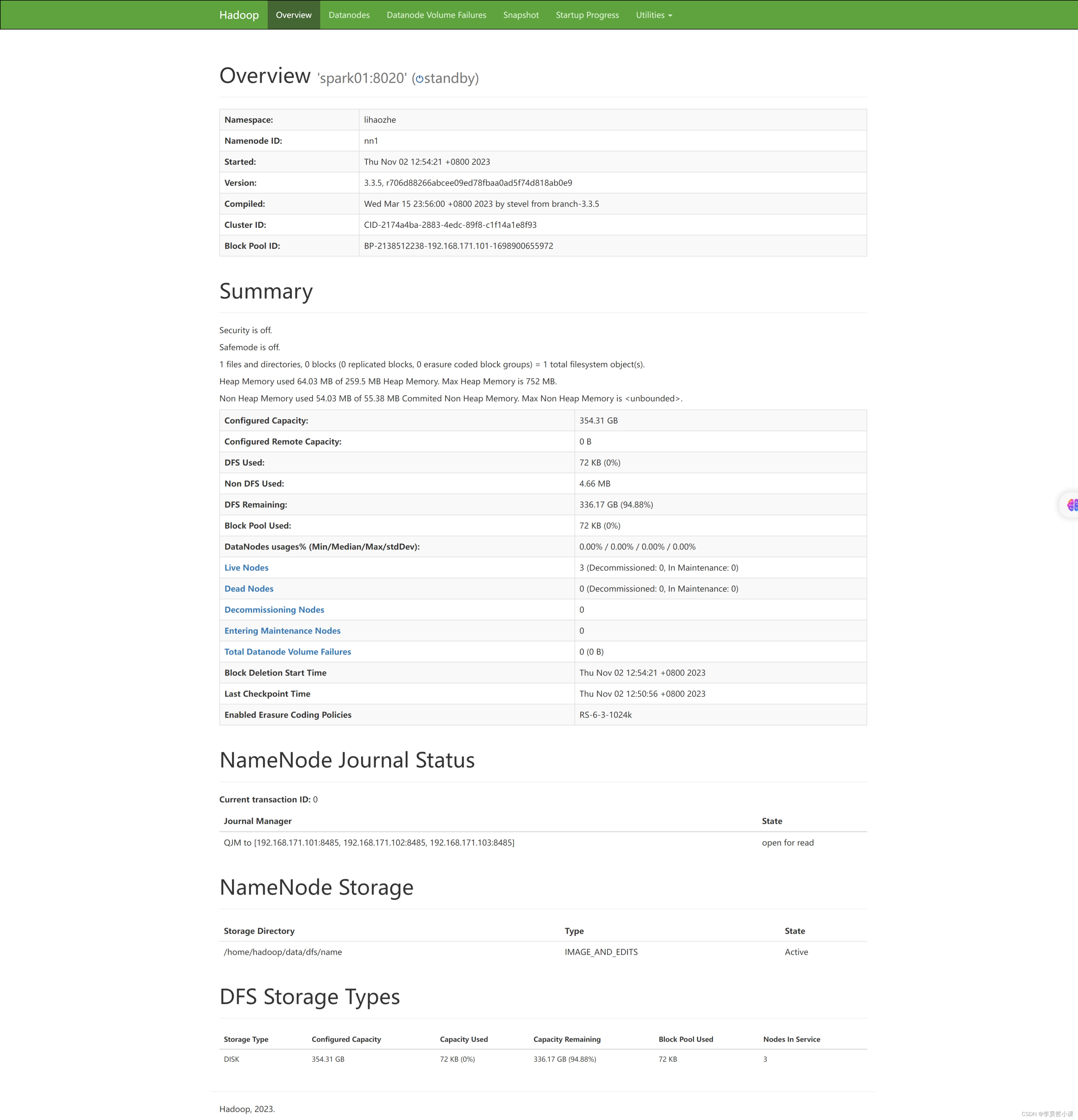

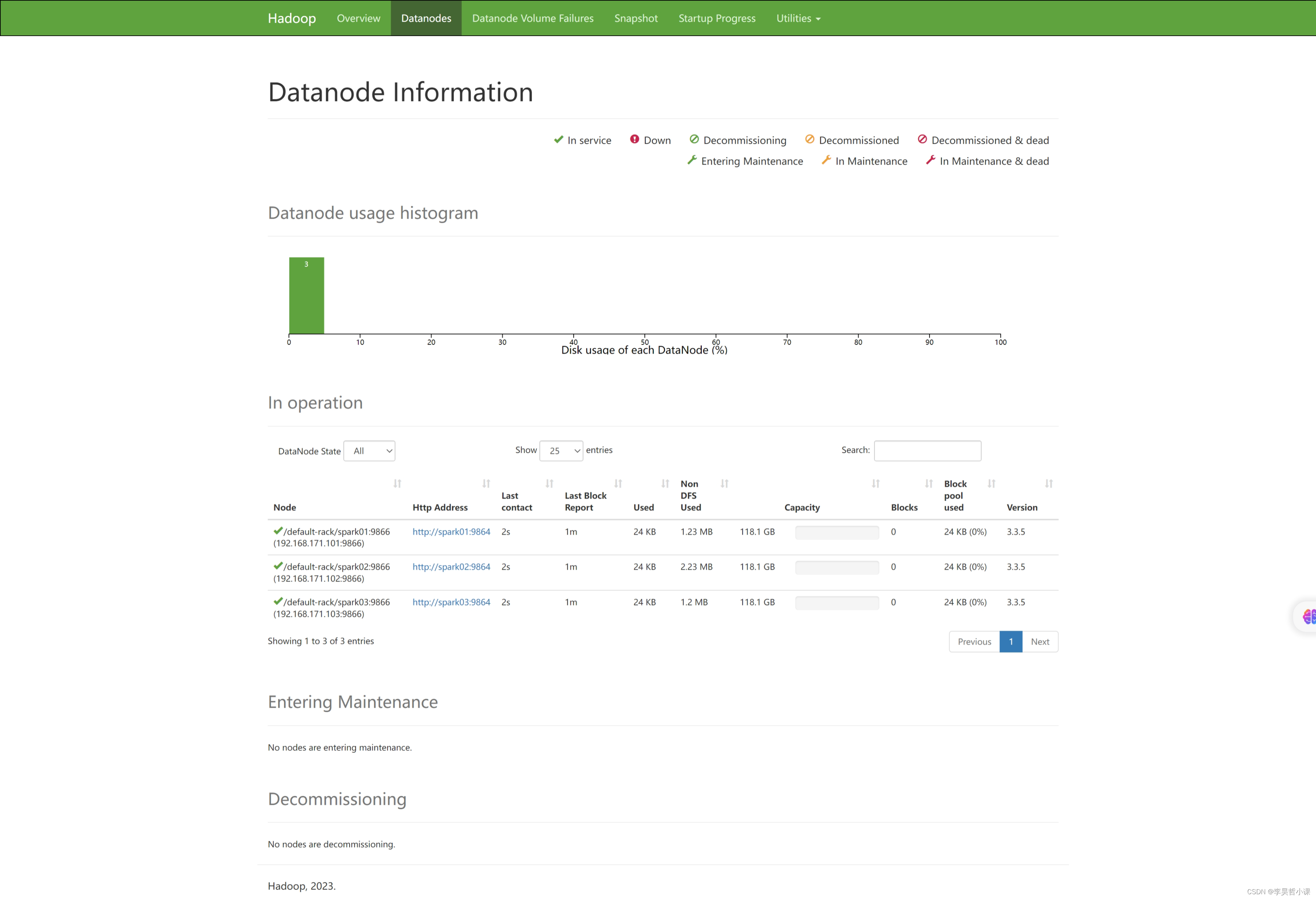

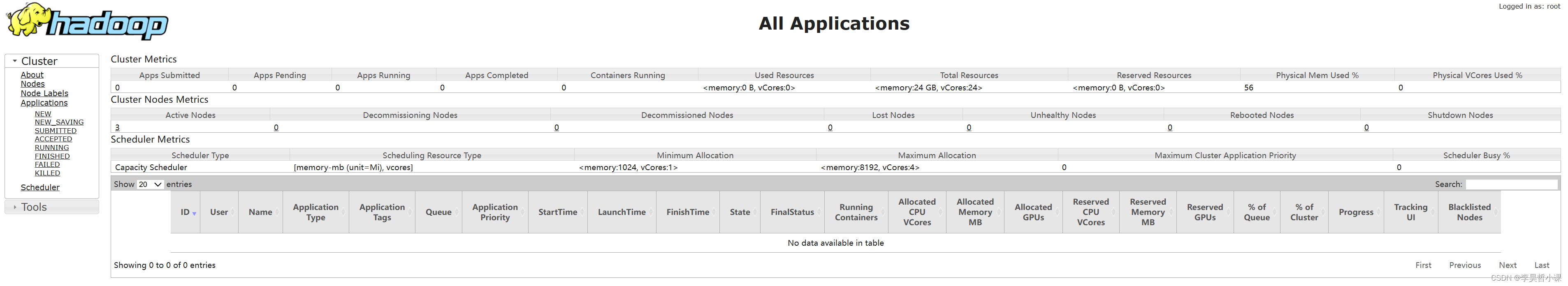

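10. Testing

12.1 Access the Hadoop cluster from a browser

NameNode web UI: http://spark01:9870

ResourceManager web UI: http://spark01:8088

JobHistory web UI: http://spark01:19888/

12.2 Test HDFS

Create a test file wcdata.txt on the local file system:

vim wcdata.txt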

Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive HiveHadoop Spark 
HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive HiveHadoop Spark HBase StormHBase 
Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive 
StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase HiveHadoop Spark HBase StormHBase Hadoop Hive FlinkHBase Flink Hive StormHive Flink HadoopHBase Hive Spark HBaseHive Flink Storm Hadoop HBase SparkFlinkHBase StormHBase Hadoop Hive

Create the directory /wordcount/input on HDFS:

hdfs dfs -mkdir -p /wordcount/input

List the HDFS directory tree:

hdfs dfs -ls /

hdfs dfs -ls /wordcount

hdfs dfs -ls /wordcount/input

Upload the local test file wcdata.txt to /wordcount/input on HDFS:

hdfs dfs -put wcdata.txt /wordcount/input

Check that the upload succeeded:

hdfs dfs -ls /wordcount/input

hdfs dfs -cat /wordcount/input/wcdata.txt

12.3 Test MapReduce

Estimate the value of pi:

hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.5.jar pi 10 10

Word count:

hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.5.jar wordcount /wordcount/input/wcdata.txt /wordcount/result

hdfs dfs -ls /wordcount/result

hdfs dfs -cat /wordcount/result/part-r-00000
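To sanity-check the job output, the same count can be computed locally with standard tools. The sketch below assumes the wordcount example splits its input on whitespace and writes `word<TAB>count` lines sorted by word in byte order; `local_wordcount` is a hypothetical helper name.

```shell
# Count whitespace-separated words from stdin; print "word<TAB>count" sorted by word.
local_wordcount() {
  tr -s '[:space:]' '\n' |      # one word per line
    sed '/^$/d' |               # drop the empty line left by leading whitespace
    LC_ALL=C sort |             # byte-order sort, independent of the locale
    uniq -c |
    awk '{ printf "%s\t%s\n", $2, $1 }'
}
```

A possible comparison is `local_wordcount < wcdata.txt > local.txt` followed by `hdfs dfs -cat /wordcount/result/part-r-00000 | diff - local.txt`; no diff output means the two counts agree.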

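11. Metadata

On spark01, change to the NameNode metadata directory. With hadoop.tmp.dir set to /home/hadoop/data and no explicit dfs.namenode.name.dir, it is located under that directory:

cd /home/hadoop/data/dfs/name/current

ls

The listing looks like this:

edits_0000000000000000001-0000000000000000009
edits_0000000000000000010-0000000000000000011
edits_0000000000000000012-0000000000000000298
edits_inprogress_0000000000000000299
fsimage_0000000000000000011
fsimage_0000000000000000011.md5
fsimage_0000000000000000298
fsimage_0000000000000000298.md5
seen_txid
VERSION

Inspect the fsimage:

hdfs oiv -p XML -i fsimage_0000000000000000011

Convert the fsimage to the given format and write it to a new file:

hdfs oiv -p XML -i fsimage_0000000000000000011 -o /opt/soft/fsimage.xml

Inspect the edits log:

Convert the edits log to the given format and write it to a new file: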

hdfs oev -p XML -i edits_inprogress_0000000000000000299 -o /opt/soft/edit.xml
